MONAI Stream SDK aims to equip experienced MONAI researchers and developers with the ability to build streaming inference pipelines while enjoying the familiar MONAI development experience and utilities.

MONAI Stream pipelines begin with a source component and end with a sink component; the two are connected by a series of filter components, as shown below.

MONAI Stream SDK natively supports:

- a number of input component types, including real-time streams (RTSP), streaming URLs, local video files, AJA capture cards with direct memory access to GPU, and a Fake Source for testing purposes,

- output components that let the developer view the result of their pipelines, or simply test them via a Fake Sink,

- a number of filter types, including format conversion, video frame resizing and/or scaling, and, most importantly, a MONAI transform component that allows developers to plug MONAI transformations into the MONAI Stream pipeline,

- Clara AGX Developer Kit in dGPU configuration.

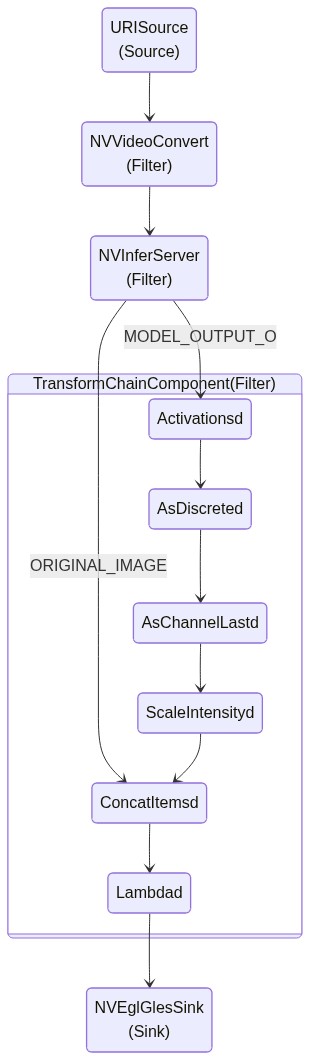

The diagram below shows a visualization of a MONAI Stream pipeline where a `URISource` is chained to video conversion, an inference service, and, importantly, to `TransformChainComponent`, which allows MONAI transformations (or any compatible callables that accept `Dict[str, torch.Tensor]`) to be plugged into the MONAI Stream pipeline. The results are then visualized on the screen via `NVEglGlesSink`.

In the conceptual example pipeline above, `NVInferServer` passes both the original image and all the inference model outputs to the transform chain component. The developer may choose to manipulate the two pieces of data separately or together to create the desired output for display.

`TransformChainComponent` presents MONAI transforms with `torch.Tensor` data containing a single frame of the video stream.
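As a rough illustration of the kind of callable this implies, the sketch below chains two dictionary-in, dictionary-out functions over a single frame. The key names `image` and `output` and the helpers `compose`, `binarize_output`, and `blend_overlay` are assumptions made for this example; they are not part of the MONAI Stream API. The code uses only plain arithmetic operators, so it runs without PyTorch installed; with `torch.Tensor` values the same operators would apply elementwise.

```python
from typing import Any, Callable, Dict

# A transform takes the per-frame dictionary and returns an updated one.
Transform = Callable[[Dict[str, Any]], Dict[str, Any]]


def compose(*transforms: Transform) -> Transform:
    """Return a callable that applies `transforms` from left to right."""

    def chained(data: Dict[str, Any]) -> Dict[str, Any]:
        for transform in transforms:
            data = transform(data)
        return data

    return chained


def binarize_output(data: Dict[str, Any]) -> Dict[str, Any]:
    """Turn the model output into a 0/1 mask (hypothetical "output" key)."""
    mask = (data["output"] > 0.5) * 1.0
    return {**data, "mask": mask}


def blend_overlay(data: Dict[str, Any], alpha: float = 0.5) -> Dict[str, Any]:
    """Blend the original image with the mask (hypothetical "image" key)."""
    overlay = alpha * data["image"] + (1 - alpha) * data["mask"]
    return {**data, "overlay": overlay}


# Scalars stand in for tensors so the example has no third-party dependency.
pipeline = compose(binarize_output, blend_overlay)
result = pipeline({"image": 0.25, "output": 0.9})
```

Here the image and the model output are first handled separately (the output is binarized) and then together (the two are blended), which mirrors the two options described above.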

In terms of implementation, `TransformChainComponent` provides a compatibility layer between MONAI and the underlying DeepStream SDK backbone, so that MONAI developers may plug existing MONAI inference code into DeepStream.
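The idea of a compatibility layer sitting between a streaming backbone and dictionary-based transforms can be sketched in plain Python. This is a conceptual toy under stated assumptions, not how `TransformChainComponent` is implemented: `fake_source`, `transform_filter`, `collecting_sink`, and the `image` key are hypothetical names, and lists of floats stand in for video frames.

```python
from typing import Callable, Dict, Iterable, Iterator, List

Frame = List[float]  # stand-in for one raw video frame
Transform = Callable[[Dict[str, Frame]], Dict[str, Frame]]


def fake_source(num_frames: int) -> Iterator[Frame]:
    """Source: emit small placeholder frames for testing."""
    for index in range(num_frames):
        yield [float(index)] * 4


def transform_filter(frames: Iterable[Frame], transform: Transform) -> Iterator[Frame]:
    """Filter: adapt each raw frame to the dict a transform expects, and back."""
    for frame in frames:
        result = transform({"image": frame})  # wrap: frame -> dict
        yield result["image"]  # unwrap: dict -> frame


def collecting_sink(frames: Iterable[Frame]) -> List[Frame]:
    """Sink: drain the stream and keep every frame."""
    return list(frames)


def double_pixels(data: Dict[str, Frame]) -> Dict[str, Frame]:
    """Example transform: scale every pixel value by two."""
    return {"image": [2.0 * pixel for pixel in data["image"]]}


# source -> filter (adapter around a dict-based transform) -> sink
frames_out = collecting_sink(transform_filter(fake_source(3), double_pixels))
```

The transform itself knows nothing about the stream; only the adapter stage does, which is the separation a compatibility layer is meant to provide.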

The codebase is currently under active development.

- Framework to allow MONAI-style inference pipelines for streaming data.

- Allows for MONAI chained transformations to be used on streaming data.

- Inference models can be used natively in MONAI or deployed via Triton Inference Server.

- Natively supports x86 and Clara AGX architectures, with the future aim of allowing developers to deploy the same code on both architectures with no changes.

To build a developer container for your workstation, simply clone the repo and run the setup script as follows.

```bash
# clone the latest release from the repo
git clone -b <release_tag> https://github.com/Project-MONAI/MONAIStream

# start development setup script
cd MONAIStream
./start_devel.sh
```

Upon successful completion of the setup script, a container will be running with all the libraries the developer needs to start designing MONAI Stream SDK inference pipelines. Development, however, is limited to the container and its mounted volumes. The developer may modify `Dockerfile.devel` and `start_devel.sh` to suit their needs.

To start developing within the newly created MONAI Stream SDK development container, users may use their favorite editor or IDE. Here, we show how to set up VSCode on a local machine to start developing MONAI Stream inference pipelines.

- Install VSCode on your Linux development workstation.

- Install the Remote Development Extension pack and restart VSCode.

- In VSCode, select the icon of the newly installed Remote Development extension on the left.

- Select "Containers" under "Remote Explorer" at the top of the dialog.

- Attach to the MONAI Stream SDK container by clicking the "Attach to Container" icon on the container name.

The above steps should allow the user to develop inside the MONAI Stream container using VSCode.

MONAI Stream SDK comes with example inference pipelines. Here, we run a sample app to perform instrument segmentation in an ultrasound video.

Inside the development container, perform the following steps.

- Download the ultrasound data and models in the container.

  ```bash
  mkdir -p /app/data
  cd /app/data
  wget https://github.com/Project-MONAI/MONAIStream/releases/download/data/US.zip
  unzip US.zip -d .
  ```

- Copy the ultrasound video to `/app/videos/Q000_04_tu_segmented_ultrasound_256.avi`, as the example app expects.

  ```bash
  mkdir -p /app/videos
  cp /app/data/US/Q000_04_tu_segmented_ultrasound_256.avi /app/videos/.
  ```

- Convert the PyTorch or ONNX model to a TRT engine.

  a. To convert the provided ONNX model to a TRT engine, use:

  ```bash
  cd /app/data/US/
  /usr/src/tensorrt/bin/trtexec --onnx=us_unet_256x256.onnx --saveEngine=model.engine --explicitBatch --verbose --workspace=1000
  ```

  This writes `model.engine`, whereas the copy step below expects `monai_unet.engine`, so rename the file or adjust `--saveEngine` if you take this route.

  b. To convert the PyTorch model to a TRT engine, use:

  ```bash
  cd /app/data/US/
  monaistream convert -i us_unet_jit.pt -o monai_unet.engine -I INPUT__0 -O OUTPUT__0 -S 1 3 256 256
  ```

- Copy the ultrasound segmentation model under `/app/models/monai_unet_trt/1`, as our sample app expects.

  ```bash
  mkdir -p /app/models/monai_unet_trt/1
  cp /app/data/US/monai_unet.engine /app/models/monai_unet_trt/1/.
  cp /app/data/US/config_us_trt.pbtxt /app/models/monai_unet_trt/config.pbtxt
  ```

- Now we are ready to run the example streaming ultrasound bone scoliosis segmentation pipeline.

  ```bash
  cd /sample/monaistream-pytorch-pp-app
  python main.py
  ```
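If the sample app fails to start, one thing worth ruling out is a missing file from the copy steps above. The following is a small, optional check, assuming the three destination paths listed in those steps are the ones the app reads; the function name `missing_files` and the `app_root` parameter are made up for this sketch and are not part of MONAI Stream.

```python
from pathlib import Path
from typing import List

# Destination paths taken from the copy steps above, relative to /app.
EXPECTED_FILES = [
    "videos/Q000_04_tu_segmented_ultrasound_256.avi",
    "models/monai_unet_trt/1/monai_unet.engine",
    "models/monai_unet_trt/config.pbtxt",
]


def missing_files(app_root: str = "/app") -> List[str]:
    """Return the expected files that do not exist under `app_root`."""
    root = Path(app_root)
    return [rel for rel in EXPECTED_FILES if not (root / rel).is_file()]
```

Calling `missing_files()` inside the container returns an empty list when everything is in place, and otherwise the relative paths that still need to be copied.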

- Website: https://monai.io/

- API documentation: https://docs.monai.io/projects/stream

- Code: https://github.com/Project-MONAI/MONAIStream

- Project tracker: https://github.com/Project-MONAI/MONAIStream/projects

- Issue tracker: https://github.com/Project-MONAI/MONAIStream/issues